A real-world example of designing a conversational AI bot

Intro

In this article, I describe how to design a conversational AI bot that integrates with back-end software.

Real example: create a bot that lets employees add time activities. A time activity records the date and the time spent on a task, can be assigned to a project or customer, and can also carry an hourly rate and a flag for whether it is billable.
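To make the target data concrete, here is a minimal sketch of how such a time activity could be modeled. The class and field names are my own assumptions for illustration, not the schema of any particular backend.

```python
from dataclasses import dataclass
from datetime import date
from decimal import Decimal
from typing import Optional


@dataclass
class TimeActivity:
    """One time entry. Field names are illustrative, not a real backend schema."""
    employee: str
    activity_date: date
    hours: Decimal
    customer: Optional[str] = None       # customer the time is assigned to
    project: Optional[str] = None        # or the project, if one is used
    hourly_rate: Optional[Decimal] = None
    billable: bool = False

    def amount(self) -> Optional[Decimal]:
        """Billable amount, or None when the entry is not billable or has no rate."""
        if not self.billable or self.hourly_rate is None:
            return None
        return self.hours * self.hourly_rate


# Example entry with made-up values.
entry = TimeActivity(
    employee="John Smith",
    activity_date=date(2021, 1, 15),
    hours=Decimal("7"),
    customer="Disney",
    hourly_rate=Decimal("50"),
    billable=True,
)
```

Everything except the employee, date, and hours is optional here, which mirrors the idea that the bot only has to collect a few values before it can create the entry.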

Step 1 - Define intents

When designing intents, I usually try to talk to the bot's future users. You can write a short story that explains the purpose of your request and ask them for different examples of utterances (intent examples) they would use to add a new time activity. The aim of this step is to collect real-life examples from users, not only to train the intents and get higher confidence scores, but also to design the bot in the most appropriate way.

Of course, you can design the utterances on your own and start designing the flow, but in the end this could lead to additional work because you would need to rebuild everything.

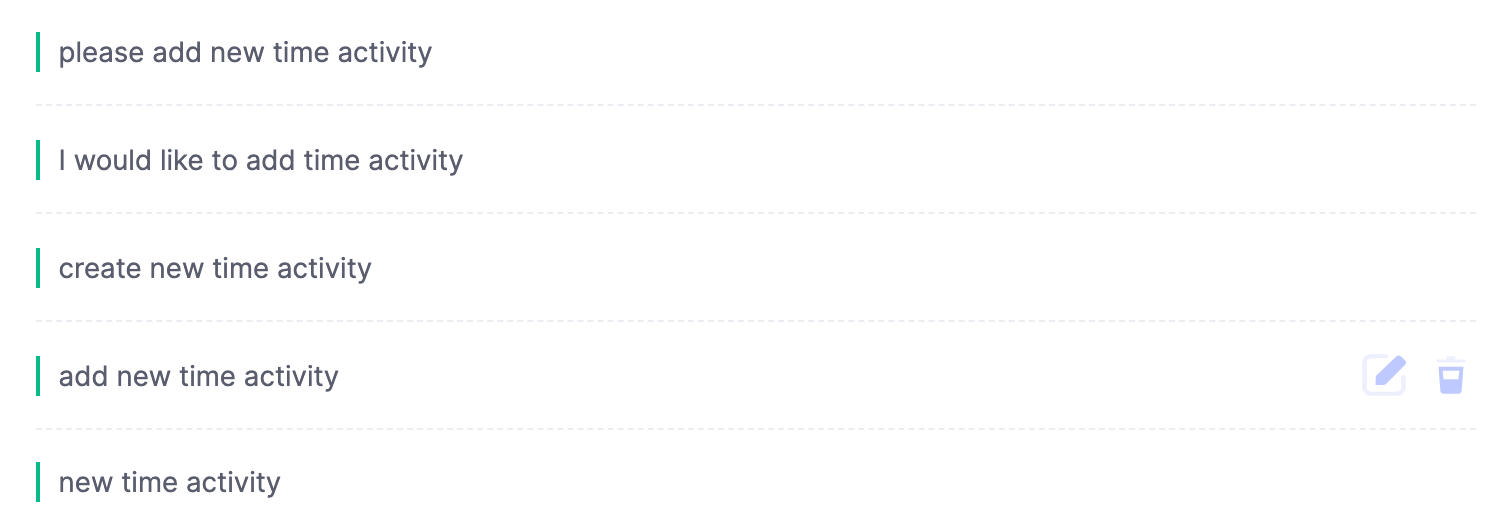

For example, you might design the following utterances (intent examples):

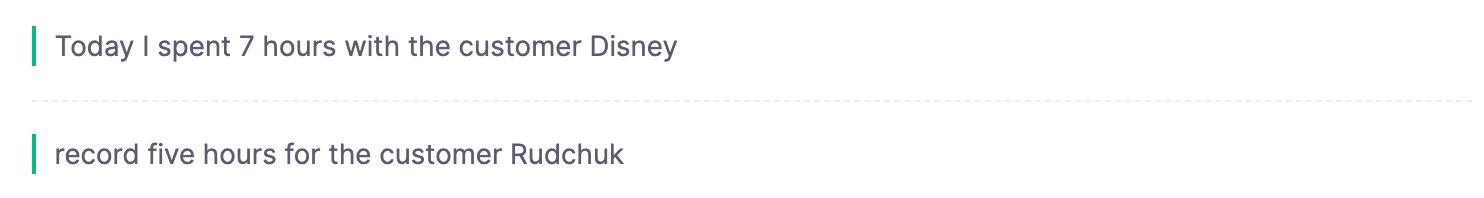

But when users start to use your bot, you will find that they try other utterances, and in many cases these will not be as simple as the ones you designed. They can look like this:

The above examples give us a lot of insight. First of all, we found that the utterances include context variables such as "Today," "I," "7 hours," and the customer "Disney." We need to process them automatically when the person provides all the needed information in the initial message. In that case, we don't need to ask the user for the date of the time activity, who performed it, the time spent, or the customer.

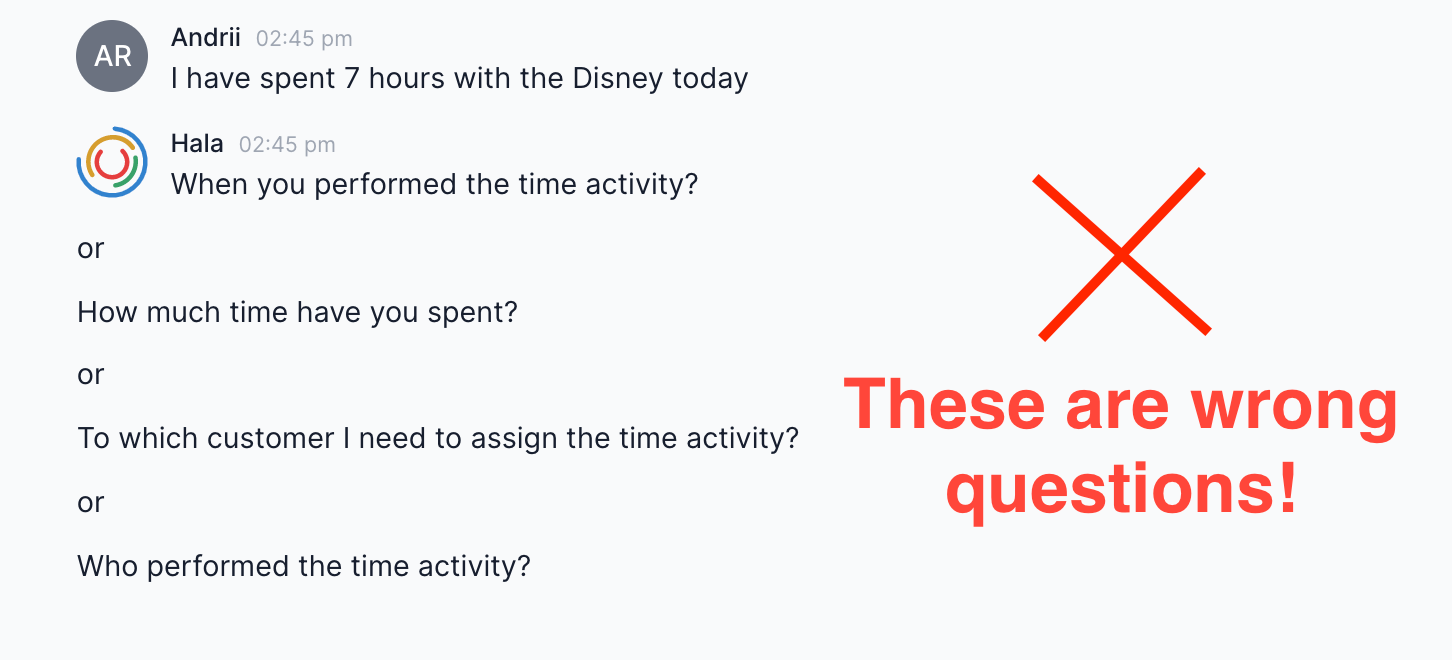

When I say we don't need to ask additional questions, I mean this:

You need to recognize all the context variables automatically and not ask unnecessary questions, because they lead to a bad user experience.
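The idea of asking only for what is still missing can be sketched as follows. The slot names and prompts are hypothetical, and the context dictionary stands in for whatever your platform returns after it has processed the user's message.

```python
from typing import Dict, List, Optional

# Slots the bot needs before it can create a time activity (hypothetical names).
REQUIRED_SLOTS = ["employee", "date", "hours", "customer"]

# Follow-up question per slot, asked only when that slot is still empty.
PROMPTS = {
    "employee": "Who performed the activity?",
    "date": "On which date?",
    "hours": "How much time was spent?",
    "customer": "Which customer is it for?",
}


def missing_slots(context: Dict[str, Optional[str]]) -> List[str]:
    """Return the required slots that were not filled from the user's message."""
    return [slot for slot in REQUIRED_SLOTS if not context.get(slot)]


def next_question(context: Dict[str, Optional[str]]) -> Optional[str]:
    """Return the next follow-up question, or None when nothing is missing."""
    missing = missing_slots(context)
    return PROMPTS[missing[0]] if missing else None


# All values were recognized in the first message, so no question is needed.
full = {"employee": "I", "date": "today", "hours": "7", "customer": "Disney"}

# Only the time and the customer were recognized.
partial = {"hours": "7", "customer": "Disney"}
```

With the `full` context, `next_question` returns `None` and the bot can go straight to creating the time activity. With the `partial` context, it first asks who performed the activity.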

Now that you have examples of different utterances, you can start designing the entities, named entity recognition, conversation flow, and integration with third-party software.

Step 2 - Define entities

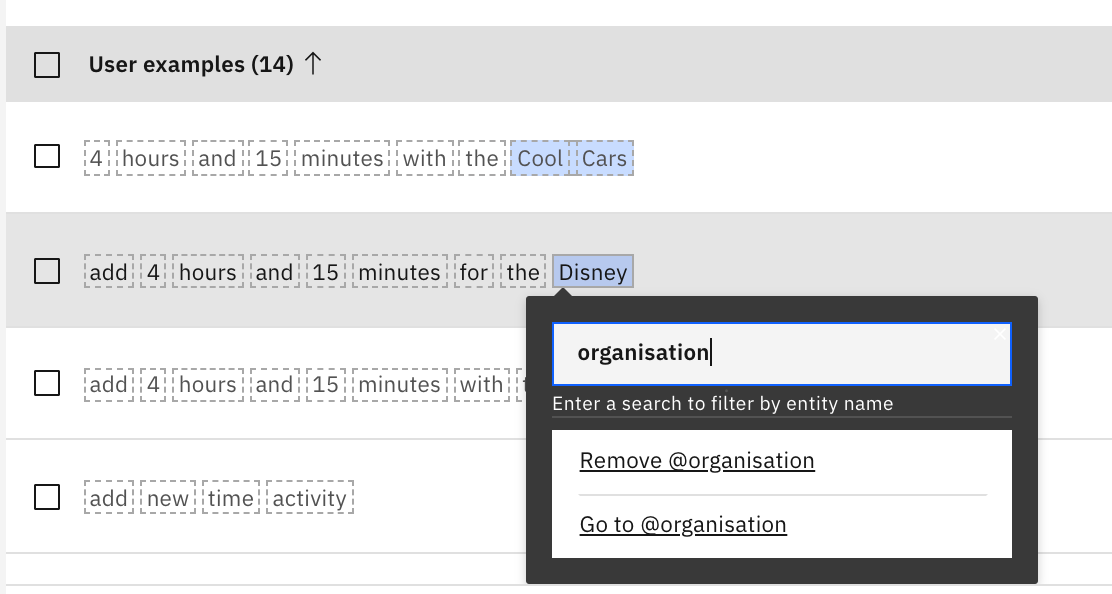

Based on the users' example utterances, we can draft the first version of the entities that recognize the context variables automatically. Some of them are easy to handle; others require more work.

Almost all platforms for developing bots (IBM Watson Assistant, Google Dialogflow, Amazon Lex) include system entities for recognizing dates, numbers, times, currencies, and so on.

Once you activate them, your bot will recognize the following data: "Today," "7 hours," and potentially "Disney." The last one is less certain: Disney is a well-known company, and some platforms can recognize organization names while others cannot. You also need to take into account that company names vary widely. A name can look like "Freezman sporting goods" or "Amy Rudchuk Company," and in such cases the system entities will either not recognize it or recognize it incorrectly.

Also, consider an utterance like this: "John Smith spent 7 hours with the Amy Rudchuk company today."

Here "John Smith" is the employee who performed the time activity and "Amy Rudchuk company" is the customer. This is a more complicated example because the system entity can return several objects, for example entity.person-John Smith and entity.person-Amy Rudchuk.

This is a challenge that you can resolve in different ways, depending on the platform you use for bot development.

In IBM Watson Assistant, for example, you can use entity annotations. If you want Watson to understand all names, you would annotate names in at least ten examples. These might include sentences such as "My name is Mary Smith" or "They call me Joseph Jones." Select each name to highlight it, then create or select an entity called "name" from the popup. From then on, Watson will understand names it has never seen before, as long as they are used in similar sentences. You can read more about annotations in the Watson Assistant documentation.

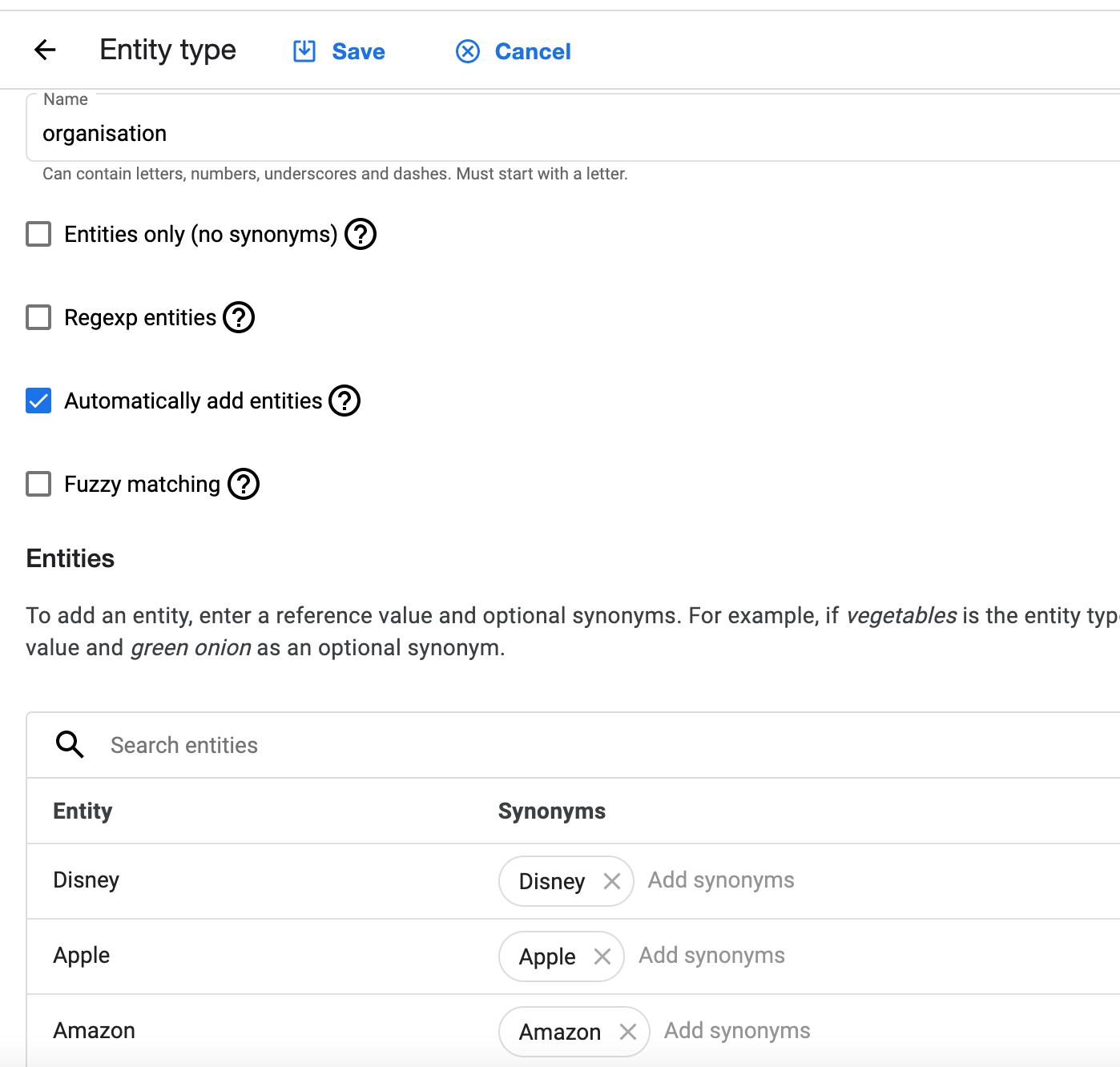

In Google Dialogflow, you can enable automated expansion for a custom entity type. When it is enabled, your agent can recognize values that have not been explicitly provided. In addition, you can apply fuzzy matching and regexp entities.

With Amazon Lex, you can use the built-in slot functionality. In addition, you can try the Amazon Comprehend service.

Still, you will find that there are limitations in defining entities, and the recognition confidence can vary. The point is that in simple cases you can predefine all possible entity values, and your bot will recognize them with high confidence. But when you can't predict all possible values, you need to find a way to use each platform's functionality for automatic recognition. Separate services are also available that can help you identify entities and relationships unique to your industry in unstructured text. One such service is IBM Watson Knowledge Studio.
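Coming back to the "John Smith spent 7 hours with the Amy Rudchuk company today" utterance: whatever platform you use, one possible way to tell the two detected persons apart is a small post-processing step that compares them with a list of known employees. This is only a sketch of that idea under my own assumptions. The function name and the employee list are hypothetical, and none of this is a feature of the platforms mentioned above.

```python
from typing import Dict, Iterable, List


def assign_roles(person_entities: Iterable[str], employees: Iterable[str]) -> Dict[str, List[str]]:
    """Split detected person names into employees and possible customer names."""
    known = {name.casefold() for name in employees}
    roles: Dict[str, List[str]] = {"employee": [], "customer_candidate": []}
    for name in person_entities:
        # A name found in the employee list is treated as the person who did the work.
        key = "employee" if name.casefold() in known else "customer_candidate"
        roles[key].append(name)
    return roles


# The two person entities from the example utterance, checked against a made-up employee list.
roles = assign_roles(
    ["John Smith", "Amy Rudchuk"],
    employees=["John Smith", "Mary Jones"],
)
```

Anything that ends up as a customer candidate is still unverified, so it would need to be checked against the customer data before it is used.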

You should also remember that some context variables (entity values) are verified by default. A date, for example, is verified by default, meaning you do not need any additional steps to check whether you can use the value of that context variable. A customer name, however, needs additional validation. It is not mandatory, but to build a better user experience we performed this step in all our projects.

Step 3 - Verify entities (optional)

Why do we need this step? In our example, the user can provide any customer name, and we need to verify that it exists on the backend side. We assume here that you are using enterprise software that holds a register of all customers. So before you move forward with the conversation, you need to take the value of the customer-name context variable and check it against the backend.
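A minimal sketch of such a check is shown below. To keep it runnable, an in-memory tuple stands in for the backend register; in a real bot this lookup would be an API call. The function name and the matching rule (exact match first, then case-insensitive substring match) are my assumptions, not a prescribed algorithm.

```python
from typing import List, Sequence

# Stand-in for the backend customer register; a real bot would query the backend API.
CUSTOMER_REGISTER = (
    "Disney",
    "Disney Store",
    "Freezman sporting goods",
    "Amy Rudchuk Company",
)


def find_customers(name: str, register: Sequence[str] = CUSTOMER_REGISTER) -> List[str]:
    """Return customers matching the recognized name, ignoring case.

    An exact match wins; otherwise every customer containing the text is returned.
    """
    needle = name.strip().casefold()
    if not needle:
        return []
    exact = [c for c in register if c.casefold() == needle]
    if exact:
        return exact
    return [c for c in register if needle in c.casefold()]
```

With this register, `find_customers("Disney")` returns only "Disney", `find_customers("dis")` returns both "Disney" and "Disney Store", and `find_customers("Acme")` returns an empty list.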

If you skip this check, you will guide the user to the end of the conversation, and when you try to create the time activity in your backend software, you will get an error saying that no such customer exists. For the user, this means repeating the whole process again. They also need to go to the enterprise software, check the customer's exact name, and then try again. This is not acceptable! It is the worst situation you can face while designing a bot.

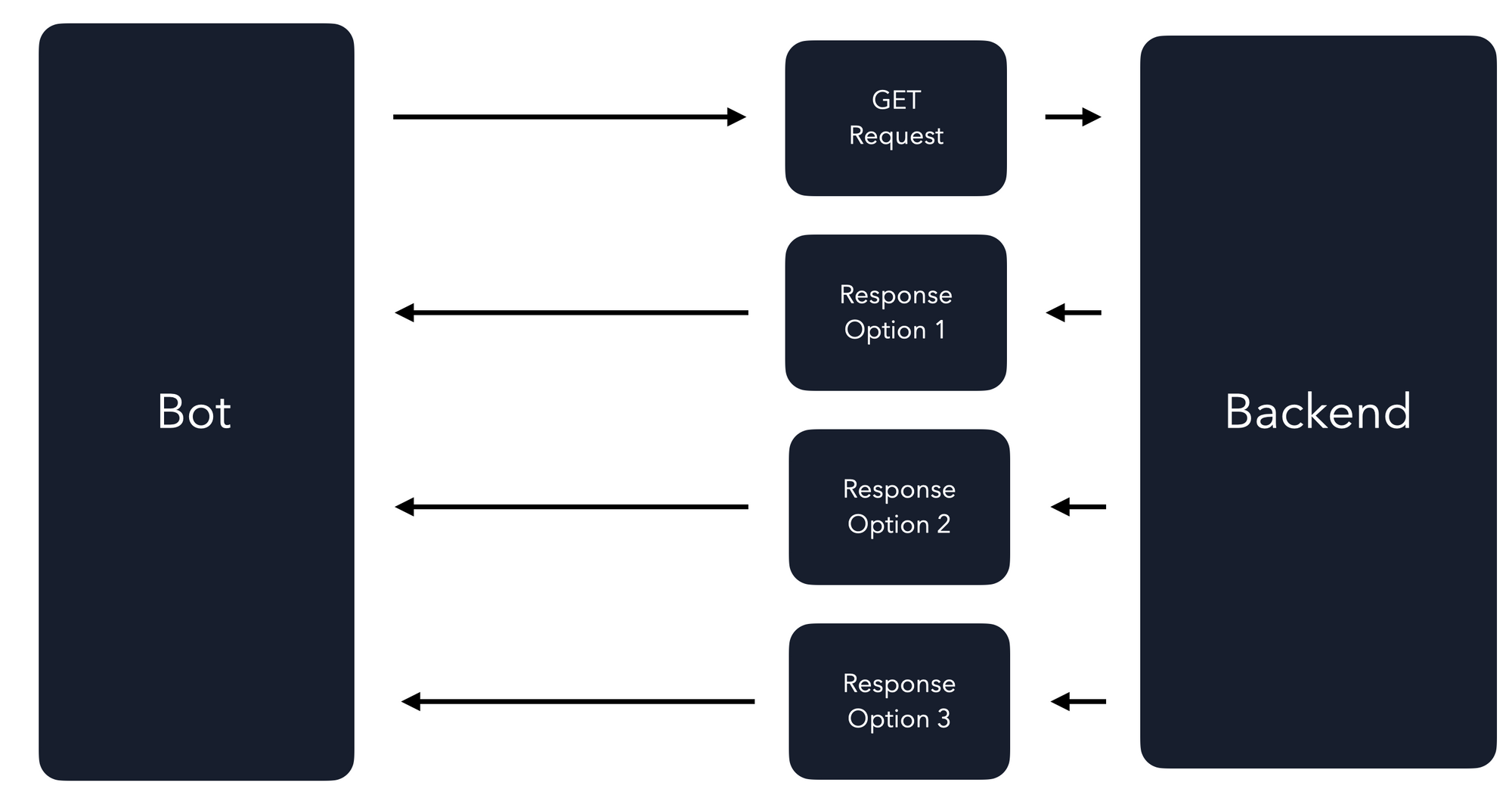

So now you need to send an API request to the backend to verify that the customer exists. I will describe the technical options for integrating with the backend via APIs in another article, if you are interested. Still, I want to describe the challenges you can face while sending data to the backend.

When you send an API request to the backend to validate that the customer's name exists, you need to evaluate at least three different response types and design conversation flows to handle each of them.

Option 1 - There is a one-to-one match. You found exactly one customer, so all is good. You are sure that the customer exists, and you can move forward with the user to create the time activity.

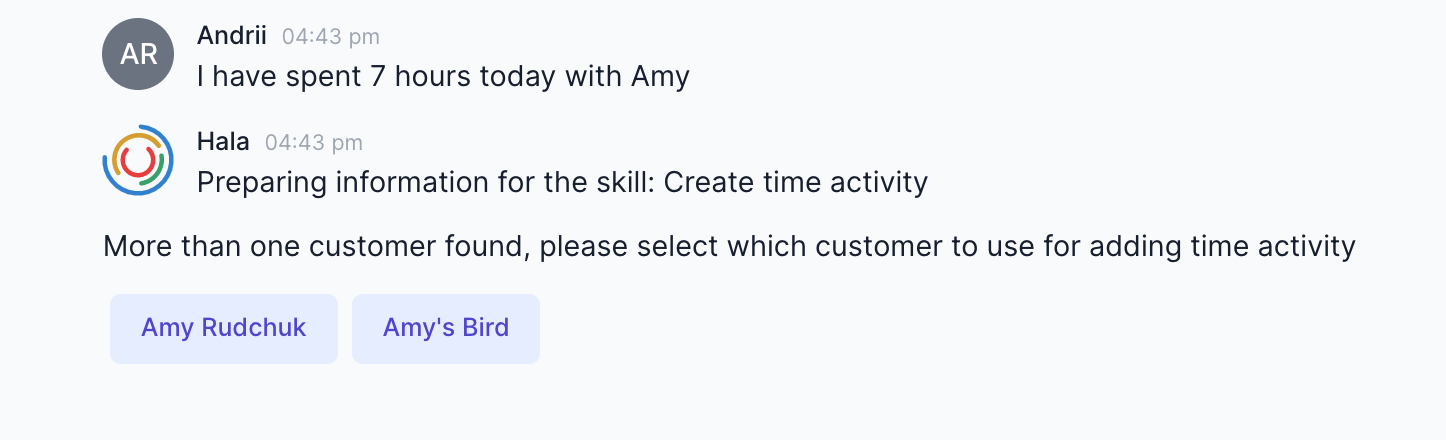

Option 2 - There is a one-to-many match. You found more than one customer. In such cases, we ask the user to choose which customer to use. We can do this because the response contains information about those customers, which you can display to the user as quick-reply buttons. After the user selects a customer, you can move forward.

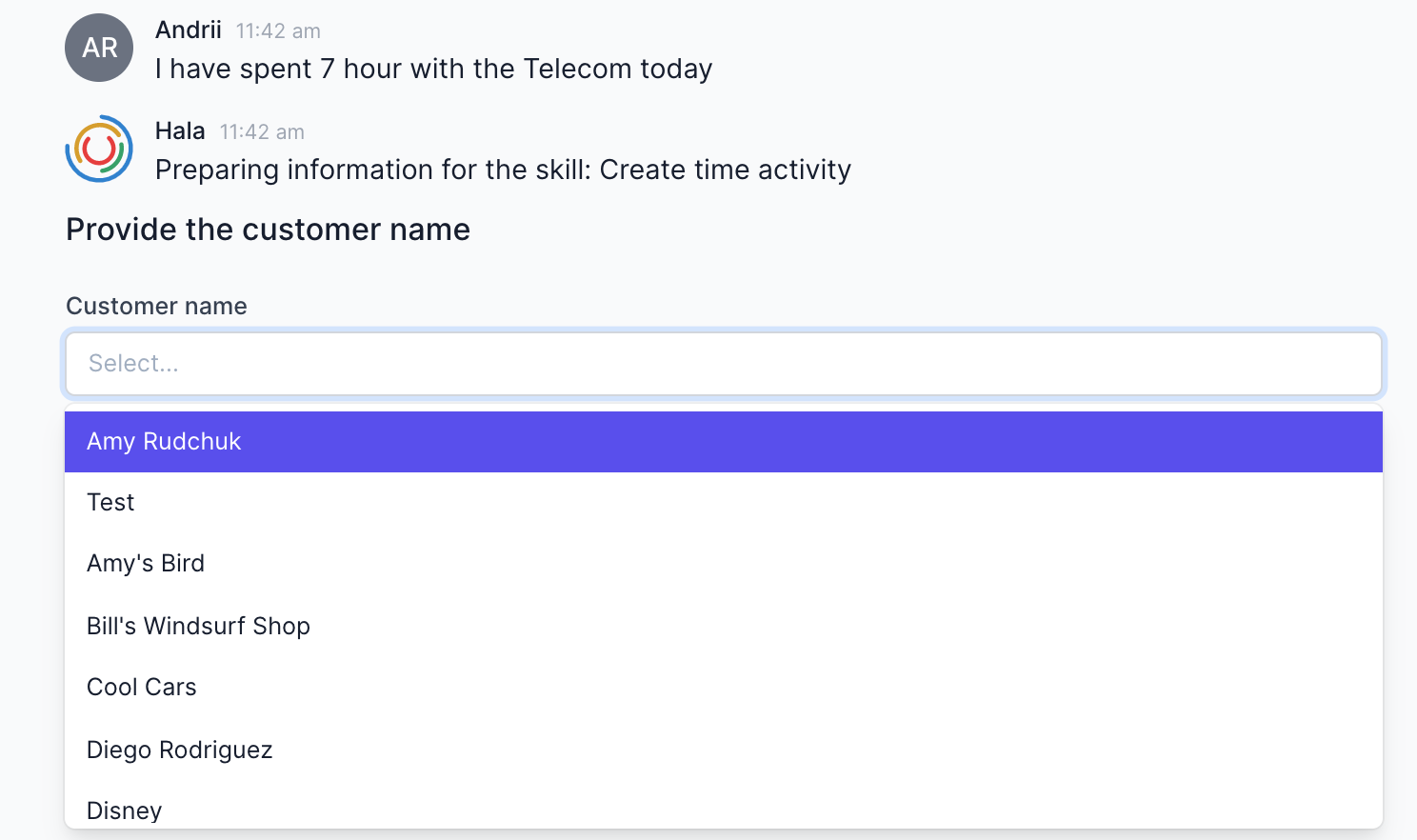

Option 3 - You get an empty response, meaning that no customer matches the context variable's value. Now you can ask the user to select the customer from the entire list of customers.

You can also evaluate a few more responses from the backend: Case #1, when the backend returns an error, and Case #2, when the integration with the backend is offline.
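The three options and the two extra cases can be sketched as one branching function. The type names, status values, and step names below are hypothetical, and what the bot does on an error or when the backend is offline is my assumption, since that depends on your own flow.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List


class Status(Enum):
    OK = "ok"            # the backend answered normally
    ERROR = "error"      # Case #1: the backend returned an error
    OFFLINE = "offline"  # Case #2: the integration could not reach the backend


@dataclass
class LookupResult:
    status: Status
    customers: List[str] = field(default_factory=list)


def next_step(result: LookupResult) -> str:
    """Pick the conversation branch for a customer lookup result."""
    if result.status is Status.OFFLINE:
        return "tell_user_backend_unavailable"
    if result.status is Status.ERROR:
        return "tell_user_lookup_failed"
    if len(result.customers) == 1:
        return "continue_with_customer"    # Option 1: one-to-one match
    if len(result.customers) > 1:
        return "show_quick_replies"        # Option 2: one-to-many match
    return "show_full_customer_list"       # Option 3: empty response
```

Keeping the status separate from the list of customers means an empty list always means "no match" and is never confused with a failed request.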

To be continued ...